What is an ETL Pipeline? - Definition & Meaning

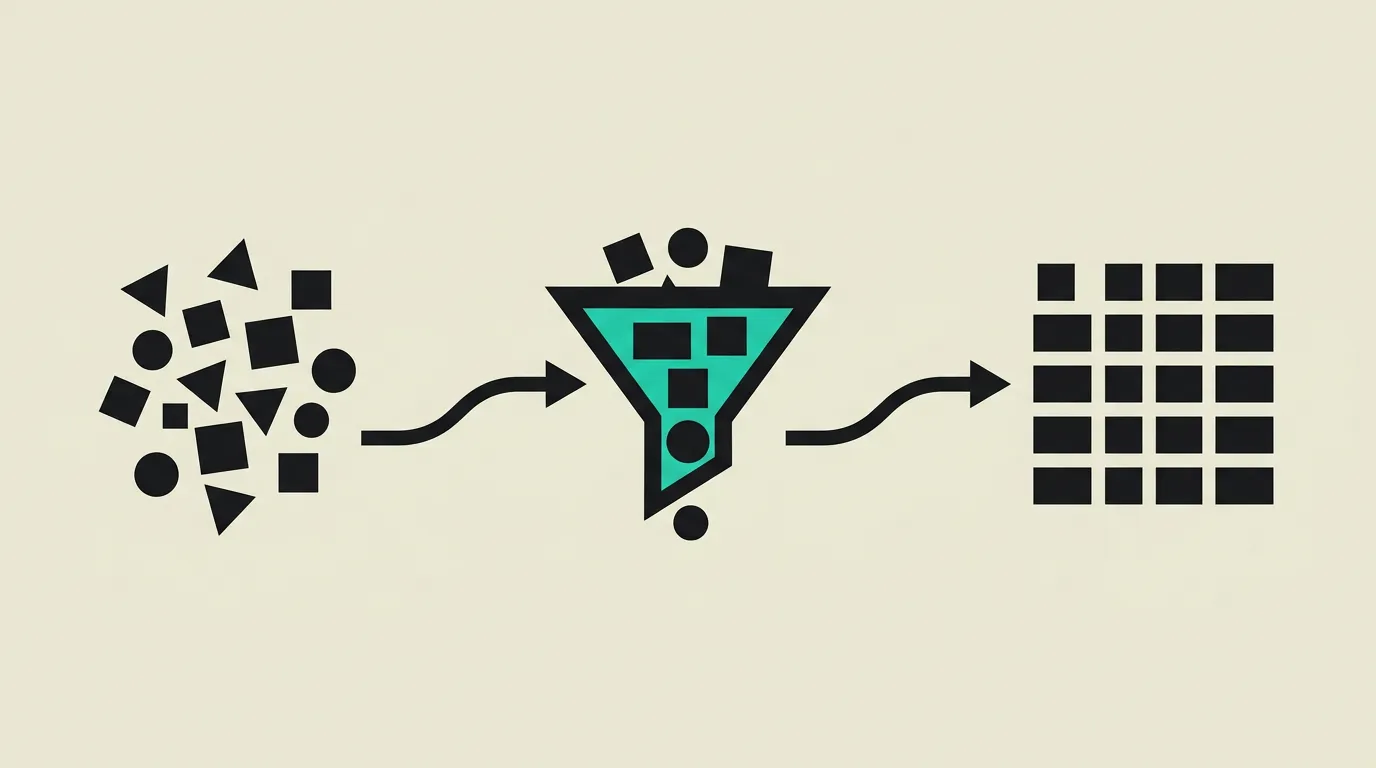

ETL pipelines extract data from sources, transform it into a uniform format, and load it into a warehouse. They are the backbone of data engineering.

An ETL pipeline (Extract, Transform, Load) is an automated process that extracts data from diverse sources, transforms it into a uniform and analysis-ready format, and loads it into a target system such as a data warehouse. It forms the backbone of data engineering and enables organizations to consolidate fragmented data into reliable reports and analyses that the entire business can use for decision making.

What is ETL Pipeline?

An ETL pipeline (Extract, Transform, Load) is an automated process that extracts data from diverse sources, transforms it into a uniform and analysis-ready format, and loads it into a target system such as a data warehouse. It forms the backbone of data engineering and enables organizations to consolidate fragmented data into reliable reports and analyses that the entire business can use for decision making.

How does ETL Pipeline work technically?

The ETL process consists of three distinct phases. Extract retrieves raw data from diverse sources: relational databases (PostgreSQL, MySQL), APIs (REST, GraphQL), file systems (CSV, Parquet on S3), SaaS applications (Salesforce, HubSpot, Stripe), and event streams (Kafka, Pub/Sub). Transform applies cleaning, normalization, aggregation, deduplication, and business logic to convert raw data into an analysis-ready format. This includes joining records from different sources, converting data types, applying currency conversions, and computing derived metrics like customer lifetime value. Load writes the transformed data to the target system, typically a data warehouse such as BigQuery or Snowflake. ELT (Extract, Load, Transform) is the modern variant where raw data is first loaded into the data warehouse and transformations happen there using SQL, leveraging the warehouse's own compute power. Apache Airflow is the standard orchestrator: DAGs (Directed Acyclic Graphs) define task dependencies with scheduling, retries, SLA monitoring, and alerting via Slack or PagerDuty. dbt (data build tool) focuses on the Transform step with version-controlled SQL models that are automatically tested for schema correctness, unique keys, and not-null constraints. Fivetran and Airbyte automate the Extract and Load steps with hundreds of pre-built connectors for popular data sources. Idempotent pipelines guarantee that repeated runs produce the same result by using upserts or merge statements instead of blind inserts. Data quality frameworks like Great Expectations or dbt tests validate data for completeness, uniqueness, consistency, and referential integrity before downstream processes consume them. Incremental loading strategies process only new or changed records based on an updated_at timestamp, a Change Data Capture (CDC) stream from Debezium, or a high-watermark column, which reduces compute costs and warehouse processing time compared to full-refresh loads that reload entire tables on every run. Data lineage tools such as dbt's built-in documentation generator, OpenLineage, and DataHub track how data flows from source to final dashboard, making it possible to assess the impact of upstream schema changes before they propagate downstream. Freshness monitoring alerts stakeholders when source tables have not been updated within the expected window, preventing decisions based on stale data. Slowly Changing Dimensions (SCD) strategies, particularly SCD Type 2 with effective date ranges, preserve historical state in dimension tables so analysts can reconstruct the state of the business at any past point in time.

How does MG Software apply ETL Pipeline in practice?

MG Software builds ETL and ELT pipelines for clients who need to consolidate data from multiple sources into a central analytics environment. We use Apache Airflow for orchestrating complex DAGs with dependencies, retries, and SLA monitoring, and dbt for modeling and testing SQL transformations with full version control in Git. For extraction, we configure Airbyte connectors or write custom Python extractors when standard connectors are not available for proprietary or legacy data sources. Every pipeline is designed to be idempotent so reruns are safe and never produce duplicate data. We implement freshness checks that alert via Slack when source tables are stale, and dbt tests validate schema correctness and business rules before data reaches downstream dashboards. Pipelines run on automated schedules, and we use dbt's built-in documentation to generate lineage graphs so the client team can trace every metric back to its source. This enables our clients to have reliable, up-to-date, and tested data in their warehouse, reducing time-to-insight and eliminating the manual CSV exports that previously consumed hours each week.

Why does ETL Pipeline matter?

Without structured data pipelines, valuable data remains locked inside separate systems. Marketing looks at Google Analytics, finance at the accounting system, and sales at the CRM, but nobody has the complete picture. ETL pipelines bring this data together in a reliable, tested, and automated process. Manual data exports consume hours per week and are error-prone. Automated pipelines run unattended and deliver consistent results regardless of team availability. Time-to-insight drops from days to hours or even minutes, enabling the organization to respond faster to changes in market conditions or customer behavior. This allows the entire organization to work with the same KPI definitions, eliminates manual CSV exports and spreadsheet gymnastics, and gives decision makers timely insights instead of stale reports that take days to compile.

Common mistakes with ETL Pipeline

Non-idempotent runs duplicate rows on restart or leave half-loaded tables in the warehouse. Source schemas change without a contract (schema drift) and silently break nightly jobs, causing dashboards to show incorrect numbers for weeks. Locally tested transformations fail in production due to timezone differences, character encoding, or locale settings. Bad records vanish without a dead-letter queue, making data loss invisible. Everything goes to reporting as raw ELT with untested ad-hoc SQL, so every department reports different revenue figures. Lineage is undocumented, so nobody knows which dashboards break when upstream changes are made. Pipelines are built without freshness alerting, so stakeholders work with stale data for days without realizing it.

What are some examples of ETL Pipeline?

- A marketing team building an ETL pipeline to combine daily data from Google Analytics, Facebook Ads, LinkedIn Ads, and their HubSpot CRM into BigQuery for an integrated marketing dashboard that computes channel attribution and cost per acquisition.

- A fintech company using Airflow to extract transaction data nightly from multiple payment providers (Stripe, Mollie, Adyen), normalize it into a unified transaction model, and load it into Snowflake for compliance reporting and fraud detection.

- An e-commerce platform using dbt models to transform raw order data into aggregated revenue metrics by product category, region, and time period, with automated tests verifying that totals match the source data.

- A SaaS company using Airbyte to load daily product usage data from their PostgreSQL database and Segment events into BigQuery, where dbt models compute churn risk scores, feature adoption, and cohort analyses.

- A healthcare organization running nightly ETL jobs to combine patient outcomes from three different hospital systems into standardized quality reports for regulatory compliance audits.

Related terms

Frequently asked questions

We work with this every day

The same expertise you are reading about, we put to work for clients across Europe.

See what we doRelated articles

What is Data Engineering? - Explanation & Meaning

Data engineering designs and builds the pipelines and infrastructure that transform raw data into actionable insights for analytics and AI applications.

Data Migration Examples - Safe Transitions to New Systems

Migrate 2M+ records with zero downtime. Data migration examples covering legacy ERP to cloud, database mergers, and e-commerce re-platforming with SEO intact.

What Is an API? How Application Programming Interfaces Power Modern Software

APIs enable software applications to communicate through standardized protocols and endpoints, powering everything from payment processing and CRM integrations to real-time data exchange between microservices.

What Is SaaS? Software as a Service Explained for Business Leaders and Teams

SaaS (Software as a Service) delivers applications through the cloud on a subscription basis. No installations, automatic updates, elastic scalability, and secure access from any device make it the dominant software delivery model for modern organizations.