What is gRPC? - Definition & Meaning

gRPC uses Protocol Buffers for binary, strongly typed communication between microservices and can be up to ten times faster than REST with JSON for internal service-to-service calls, depending on payload and workload.

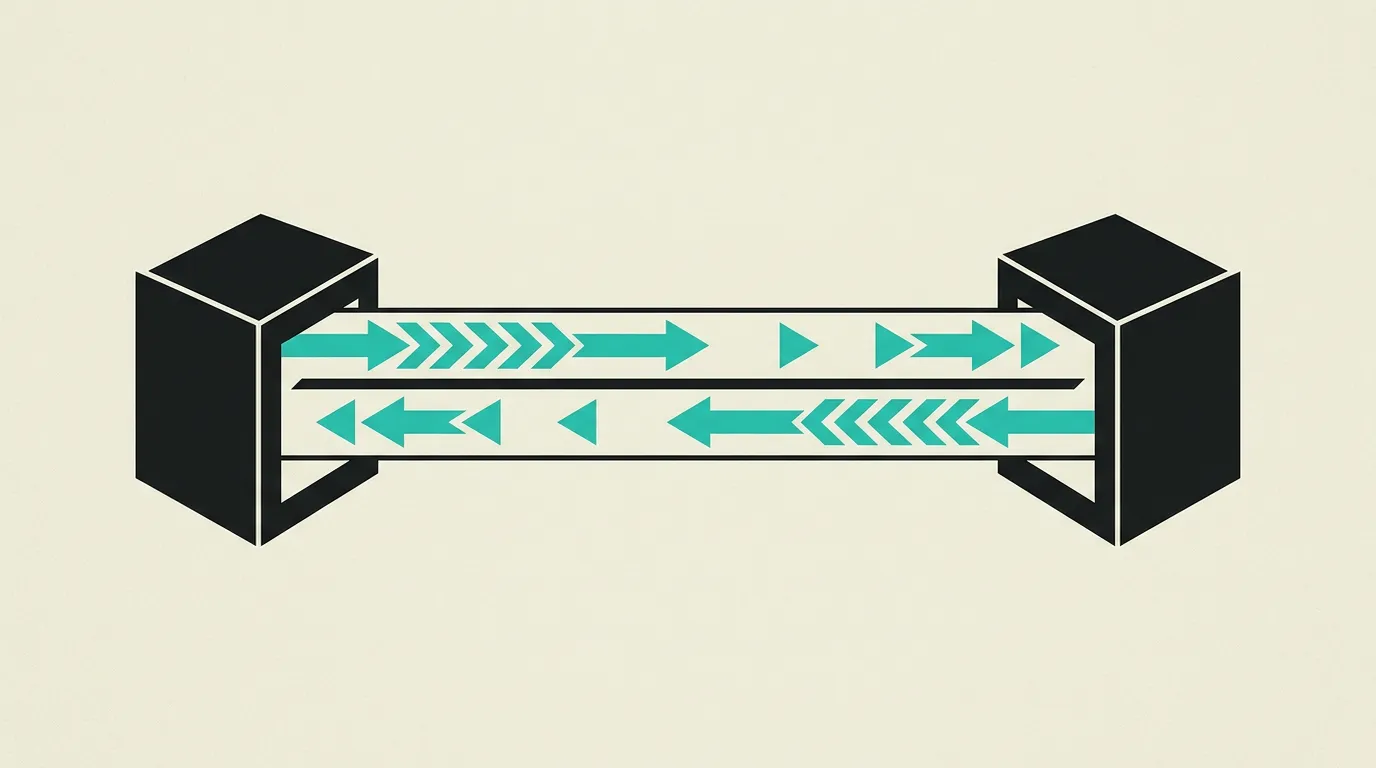

What is gRPC?

gRPC is an open-source Remote Procedure Call framework created at Google that uses HTTP/2 for transport and Protocol Buffers for binary serialization. It enables strongly-typed, language-agnostic communication between services with automatic client and server code generation for over a dozen programming languages. gRPC natively supports four communication patterns: unary calls, server streaming, client streaming, and bidirectional streaming.

How does gRPC work technically?

gRPC runs on HTTP/2, which provides multiplexed streams over a single TCP connection, HPACK header compression, and per-stream flow control. A single gRPC channel can carry hundreds of concurrent RPCs without the request-level head-of-line blocking that limits HTTP/1.1 connections.

Protocol Buffers define service contracts in .proto files that compile into client stubs and server interfaces for Go, Java, Python, C++, TypeScript, Rust, and other languages. Protobuf encodes data in a compact binary wire format in which field names are replaced by integer tags and integer values use variable-length (varint) encoding, producing payloads that are typically much smaller and faster to parse than equivalent JSON. Service definitions evolve through field numbers: adding a field with a new number is backward-compatible because old clients ignore unknown fields, while the number of a removed field should be reserved to prevent accidental reuse.

The four RPC patterns cover most distributed scenarios. Unary RPCs work like a function call with one request and one response. Server streaming returns a sequence of messages, useful for large result sets or event feeds. Client streaming sends a sequence of messages before the server replies, fitting file uploads or telemetry ingestion. Bidirectional streaming opens a full-duplex channel for interactive protocols like chat or collaborative editing.

Deadlines propagate through the call chain: if Service A calls Service B with a 500 ms deadline, Service B sees the remaining budget and can abort early instead of wasting work. Interceptors attach cross-cutting concerns such as authentication tokens, distributed tracing headers (OpenTelemetry), and request logging without touching business logic.

Compared to REST, gRPC trades human-readability and browser-native support for strong contracts, code generation, and lower serialization cost.
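
The tag-and-varint wire format described above can be made concrete with a small sketch. It is a hand-rolled illustration in plain Python, not the protobuf library, and it only covers non-negative integer fields; the field name `user_id` in the JSON comparison is a hypothetical example.

```python
import json
from typing import Tuple


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a base-128 varint (7 bits per byte)."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        low7 = value & 0x7F
        value >>= 7
        if value:
            out.append(low7 | 0x80)  # high bit set: more bytes follow
        else:
            out.append(low7)         # high bit clear: last byte
            return bytes(out)


def decode_varint(data: bytes, pos: int = 0) -> Tuple[int, int]:
    """Decode a varint starting at pos; return (value, next position)."""
    result = 0
    shift = 0
    while True:
        byte = data[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:
            return result, pos
        shift += 7


def encode_int_field(field_number: int, value: int) -> bytes:
    """Encode one integer field: tag (field number + wire type 0), then value."""
    wire_type_varint = 0
    tag = (field_number << 3) | wire_type_varint
    return encode_varint(tag) + encode_varint(value)


# Field number 1 carrying the value 150, versus a JSON object with a named key.
wire = encode_int_field(1, 150)
as_json = json.dumps({"user_id": 150}).encode()  # "user_id" is hypothetical
print(wire.hex())                # 089601
print(len(wire), len(as_json))   # 3 16
```

The field name never appears on the wire; only the number 1 does, which is why field numbers, not names, must stay stable across schema versions.
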
Compared to GraphQL, gRPC offers fixed schemas and native streaming but lacks the flexible query model that lets frontends request exactly the fields they need. In practice, many architectures use gRPC internally between services and expose REST or GraphQL at the edge for external consumers.

Load balancing requires attention because HTTP/2 connections are long-lived: a connection-level load balancer picks a backend once per connection, so every RPC on that connection lands on the same backend. Client-side balancing via a service registry (Consul, etcd) or a sidecar proxy (Envoy, Linkerd) distributes individual RPCs across backends instead. The built-in health checking protocol integrates with Kubernetes readiness probes, and gRPC reflection allows tools like grpcurl and Postman to discover services at runtime without precompiled stubs.

HTTP/2 flow control operates at two levels, per stream and per connection, so a slow consumer on one stream does not block other streams sharing the same connection. Keepalive pings keep idle connections open and detect dead ones before they silently fail, which is critical in cloud environments where load balancers close idle connections after a timeout. gRPC channelz provides runtime diagnostics about active channels, connection statistics, and error counts via a debug endpoint.

Protobuf oneof fields model polymorphic messages without inheritance, which is useful in event-driven architectures where a single message type can carry one of several variants. Service mesh integration with Istio or Linkerd adds mTLS, traffic management, and observability to gRPC traffic without modifying application code.
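
The load-balancing point can be illustrated with a toy simulation. It does not use gRPC at all; it only counts which backend would receive each call under two policies. The backend names and function names are assumptions made for this sketch.

```python
from collections import Counter
from itertools import cycle

BACKENDS = ["backend-a", "backend-b", "backend-c"]  # hypothetical backend names


def connection_level(num_rpcs: int) -> Counter:
    """Backend chosen once at connect time; every RPC reuses that connection."""
    chosen = BACKENDS[0]  # stands in for whichever backend the balancer picked
    return Counter(chosen for _ in range(num_rpcs))


def per_rpc_round_robin(num_rpcs: int) -> Counter:
    """A client-side balancer or sidecar picks a backend for each RPC."""
    picker = cycle(BACKENDS)
    return Counter(next(picker) for _ in range(num_rpcs))


print(connection_level(300))     # all 300 calls land on backend-a
print(per_rpc_round_robin(300))  # 100 calls per backend
```

Real balancers use richer policies than plain round robin, but the contrast is the same: the balancing decision has to happen per RPC, not per connection.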

How does MG Software apply gRPC in practice?

MG Software uses gRPC for internal service-to-service communication in microservice projects where latency and throughput requirements rule out the overhead of REST. We store .proto files in a shared repository, generate TypeScript and Go client code from them, and run Buf linting in CI to catch breaking schema changes before they reach production. We tune keepalive parameters to match the cloud environment's load balancer timeouts to prevent silent connection failures. We also generate API documentation from .proto files with protoc-gen-doc and publish it to our internal developer portal for team members and stakeholders. For browser-facing clients we proxy through Envoy with gRPC-Web. Public-facing APIs remain REST or GraphQL because external consumers expect familiar tooling and human-readable payloads.
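
To give a feel for what a breaking-change check in CI looks for, here is a toy sketch. It is not how Buf works internally and not MG Software's tooling; the `Schema` representation, the `breaking_changes` function, and the example fields are all assumptions invented for illustration.

```python
from typing import Dict, List, Set, Tuple

# field number -> (field name, field type); a hand-written stand-in
# for one message parsed from a .proto file
Schema = Dict[int, Tuple[str, str]]


def breaking_changes(old: Schema, new: Schema, reserved: Set[int]) -> List[str]:
    """Report removed-but-unreserved field numbers and changed field types."""
    problems = []
    for number, (name, ftype) in sorted(old.items()):
        if number not in new:
            if number not in reserved:
                problems.append(
                    f"field {number} ({name}) removed without reserving its number"
                )
        elif new[number][1] != ftype:
            problems.append(
                f"field {number} changed type from {ftype} to {new[number][1]}"
            )
    return problems


old_schema = {1: ("id", "string"), 2: ("email", "string"), 3: ("age", "int32")}

# Safe evolution: field 2 is removed and reserved, field 4 is new.
safe_schema = {1: ("id", "string"), 3: ("age", "int32"), 4: ("phone", "string")}
print(breaking_changes(old_schema, safe_schema, reserved={2}))  # []

# Unsafe evolution: field 1 changes type, field 2 disappears unreserved.
unsafe_schema = {1: ("id", "int64"), 3: ("age", "int32")}
for problem in breaking_changes(old_schema, unsafe_schema, reserved=set()):
    print(problem)
```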

Why does gRPC matter?

In distributed systems, network calls between services often dominate end-to-end latency. gRPC reduces serialization cost and connection overhead, and in complex microservice architectures with dozens of services, every millisecond saved per hop compounds across the full request chain. A request passing through five services can save 50 to 100 milliseconds with gRPC compared to REST with JSON, although the actual gain depends on payload size and network conditions. The strict contracts enforced by Protocol Buffers, together with generated code, catch integration bugs at compile time that with REST often surface only in production, which becomes increasingly valuable as the number of services grows.

Common mistakes with gRPC

- Exposing gRPC endpoints directly to browsers without a gRPC-Web proxy, then debugging silent failures.

- Changing or reusing field numbers when shipping .proto changes, which can silently corrupt data for older clients.

- Ignoring deadline propagation so downstream services keep working long after the caller has timed out.

- Adopting gRPC for a public API consumed by third-party developers who lack protobuf tooling.

- Running long-lived streams without connection management or load-balancing awareness, causing uneven traffic distribution across backends.

- Leaving keepalive settings at defaults, so load balancers close idle connections and clients receive cryptic errors.

- Letting protobuf messages grow unchecked because fields are never deprecated or cleaned up, which leaves API contracts that are hard to understand and maintain.
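
The deadline-propagation mistake is easier to see in a small sketch. The version below uses plain function calls in place of network RPCs and only the standard library; `service_a`, `service_b`, and `DeadlineExceeded` are hypothetical names, and real gRPC libraries handle the deadline transport for you.

```python
import time


class DeadlineExceeded(Exception):
    """Raised when the remaining budget cannot cover the planned work."""


def remaining(deadline: float) -> float:
    """Seconds left before an absolute deadline on the monotonic clock."""
    return deadline - time.monotonic()


def service_b(deadline: float, work_seconds: float) -> str:
    # Check the caller's remaining budget before starting expensive work.
    if remaining(deadline) < work_seconds:
        raise DeadlineExceeded("not enough budget left, aborting early")
    time.sleep(work_seconds)  # stands in for real work
    return "ok"


def service_a(timeout_seconds: float, own_work: float, b_work: float) -> str:
    deadline = time.monotonic() + timeout_seconds
    time.sleep(own_work)  # Service A spends part of the budget itself
    # Pass the same absolute deadline downstream, not a fresh timeout.
    return service_b(deadline, b_work)


print(service_a(timeout_seconds=0.5, own_work=0.01, b_work=0.01))

try:
    service_a(timeout_seconds=0.05, own_work=0.04, b_work=0.2)
except DeadlineExceeded as exc:
    print("aborted:", exc)
```

The key design choice is that the downstream call receives the caller's deadline rather than starting its own timer, so work stops when the original caller would no longer be waiting for the result.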

What are some examples of gRPC?

- A payment platform using gRPC for communication between the order service and payment service, with bidirectional streaming for real-time transaction status updates.

- A machine learning platform leveraging gRPC to distribute model inference requests to GPU servers, where protobuf encoding eliminates the JSON serialization bottleneck on high-throughput prediction endpoints.

- A gaming backend using gRPC server streaming to push real-time game state updates to all connected players with sub-frame latency.

- A ride-sharing service using bidirectional gRPC streaming between driver apps and the dispatch service to exchange location updates and trip assignments with round-trip times under 50 milliseconds.

- An observability pipeline where agents on thousands of servers stream metrics and traces to a central collector over gRPC, handling millions of spans per minute with minimal CPU overhead thanks to protobuf encoding.
