What is a Message Queue? - Definition & Meaning

Message queues decouple system components through asynchronous communication, with brokers such as RabbitMQ and Kafka commonly used for reliable, scalable data processing.

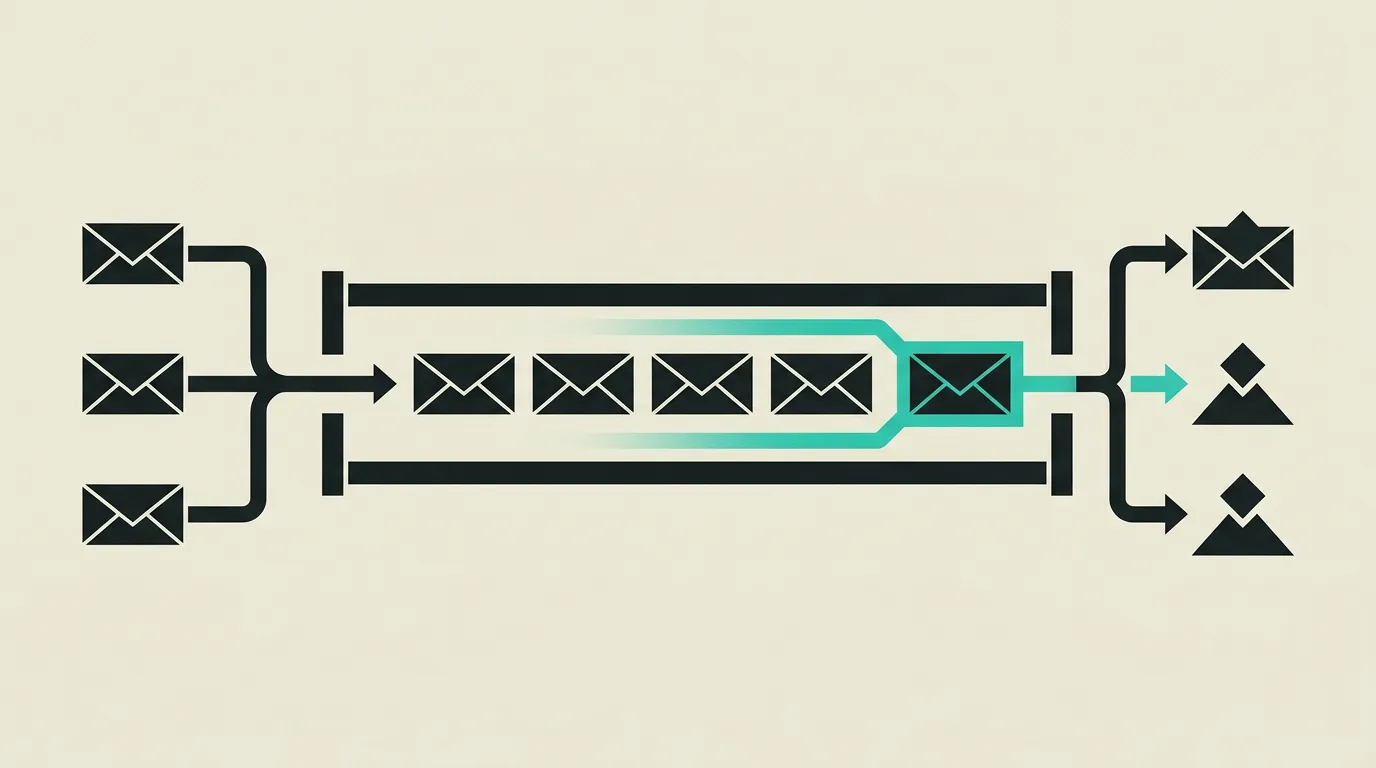

What is a message queue?

A message queue is a communication mechanism in which messages are held in a queue, typically a durable one, until the receiver can process them. This decouples the sender (producer) from the receiver (consumer) and enables asynchronous processing in which each side works at its own pace. Message queues are a core building block of distributed systems and event-driven architectures: they provide reliable communication between loosely coupled application components that do not need to be available at the same time.

How does a message queue work technically?

Message queues implement the producer-consumer pattern: producers send messages to a queue, and consumers retrieve and process them independently.

RabbitMQ is a widely used AMQP broker that routes messages through exchanges, bindings, and routing keys, supporting direct, topic, fanout, and headers exchanges. Consumers acknowledge each message once they have processed it, and only then does the broker remove it from the queue; if a consumer crashes before acknowledging, the message is redelivered instead of lost.

Apache Kafka is a distributed streaming platform that stores messages in ordered, immutable, append-only logs called partitions. Kafka offers high throughput (a well-provisioned cluster can handle millions of messages per second), persistence, and replay, which makes it a strong fit for event sourcing and audit trails. Consumer groups spread a topic's partitions across the consumers in the group, so processing scales horizontally.

Several patterns make queue-based systems robust. Dead letter queues (DLQs) capture messages that still fail after a configurable number of attempts. Idempotency patterns, such as attaching a unique message ID and skipping IDs that were already processed, prevent duplicate side effects when messages are retried. Backpressure mechanisms, such as bounded queues or consumer prefetch limits, protect consumers from overload by slowing producers down.

Managed cloud services such as AWS SQS, Google Cloud Pub/Sub, and Azure Service Bus provide queueing without the need to run and maintain your own brokers. In event-driven architectures, each service publishes events and reacts to events from other services, which yields loose coupling, better scalability, and independent deployment cycles. Serializing messages with Avro or Protocol Buffers and registering the schemas in a schema registry (such as Confluent Schema Registry for Kafka) enforces backward and forward compatibility as message formats evolve, so producers and consumers can be updated independently.

Since version 0.11, Kafka has supported exactly-once semantics through idempotent producers and transactions, with consumers reading only committed data via the isolation.level=read_committed setting. This guarantee covers read-process-write pipelines within Kafka; side effects in external systems, such as sending an email or charging a card, still require idempotent consumers.
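
The producer-consumer pattern and backpressure described above can be illustrated in a single process with Python's standard `queue` module. This is a minimal sketch, not RabbitMQ or Kafka client code: a bounded `queue.Queue` stands in for the broker, `task_done()` only loosely resembles an acknowledgment, and `run_pipeline` is a hypothetical helper.

```python
import queue
import threading


def run_pipeline(n_items: int, n_consumers: int = 2, maxsize: int = 5) -> list[int]:
    """Producer-consumer sketch in which a bounded queue provides backpressure."""
    jobs: queue.Queue = queue.Queue(maxsize=maxsize)  # put() blocks when full
    results: list[int] = []
    lock = threading.Lock()

    def consumer() -> None:
        while True:
            item = jobs.get()
            try:
                if item is None:  # sentinel: no more work for this consumer
                    return
                with lock:
                    results.append(item * item)  # stand-in for real processing
            finally:
                jobs.task_done()  # loosely analogous to acknowledging a message

    workers = [threading.Thread(target=consumer) for _ in range(n_consumers)]
    for w in workers:
        w.start()

    # The producer runs in this thread. Once maxsize items are waiting,
    # put() blocks until a consumer frees a slot: that is the backpressure.
    for i in range(n_items):
        jobs.put(i)
    for _ in workers:
        jobs.put(None)  # one sentinel per consumer

    for w in workers:
        w.join()
    return sorted(results)  # consumers finish in arbitrary order


if __name__ == "__main__":
    print(run_pipeline(10))
```

With `maxsize=1`, at most one message waits unclaimed at any moment, so the producer can only run as fast as the consumers drain the queue.
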

Kafka guarantees ordering only within a single partition; global ordering requires a single-partition topic, which caps throughput at what one partition and one consumer can handle. RabbitMQ supports priority queues, which deliver higher-priority messages first, and quorum queues, which replicate data using the Raft consensus algorithm for stronger durability when cluster nodes fail. RabbitMQ also supports a message TTL, set per queue or per individual message, so stale messages do not consume resources indefinitely.
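
Per-partition ordering follows from how keyed messages are routed: every message with the same key lands in the same partition and is appended in order. A minimal sketch, assuming a crc32 hash as a stand-in for Kafka's actual default partitioner (which hashes keys with murmur2); `partition_for` and `assign` are hypothetical helpers, not Kafka client APIs.

```python
import zlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    # Same key -> same partition. Kafka's default partitioner uses murmur2;
    # crc32 is a stand-in that keeps this sketch dependency-free.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign(events: list[tuple[str, str]], num_partitions: int) -> dict[int, list[tuple[str, str]]]:
    """Append each (key, payload) event to its partition's log, in arrival order."""
    logs: dict[int, list[tuple[str, str]]] = defaultdict(list)
    for key, payload in events:
        logs[partition_for(key, num_partitions)].append((key, payload))
    return dict(logs)


events = [
    ("order-1", "created"),
    ("order-2", "created"),
    ("order-1", "paid"),
    ("order-2", "cancelled"),
    ("order-1", "shipped"),
]

logs = assign(events, num_partitions=3)
for partition, log in sorted(logs.items()):
    print(partition, log)
```

Whichever partitions the two keys hash to, each key's events appear in its partition in the order they were produced. If the keys end up in different partitions, nothing orders the events of order-1 relative to those of order-2.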

How does MG Software apply message queues in practice?

At MG Software, we use message queues to offload time-consuming tasks such as email delivery via Resend, PDF generation, and payment processing. In microservice architectures, we deploy RabbitMQ or managed alternatives such as AWS SQS so that services can scale independently. Every queue consumer is designed to be idempotent and retries failed work with exponential backoff. We monitor queue depth and consumer lag through dashboards and alerts, so problems surface before they affect end users. As a result, client applications stay responsive during peak load while background tasks are processed reliably. Each message carries a correlation ID, which lets us trace the full processing chain end to end in our observability stack. Dead letter queues have dedicated alerting, and our admin tooling lets the team inspect failed messages, identify root causes, and replay them into the original queue with a single action once the underlying issue is fixed.
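
Idempotency, retries with exponential backoff, and correlation IDs can be combined in a single consumer function. A minimal sketch under stated assumptions: `Message` and `consume` are hypothetical names, the set of processed IDs lives in memory here (a production consumer would likely keep it in a durable store, ideally recorded together with the side effect), and the injectable `sleep` parameter exists only so the backoff can be tested without waiting.

```python
import logging
import time
from dataclasses import dataclass, field
from typing import Callable

logger = logging.getLogger("consumer")


@dataclass
class Message:
    message_id: str      # unique per message, used for deduplication
    correlation_id: str  # shared across the whole processing chain for tracing
    body: dict = field(default_factory=dict)


def consume(
    msg: Message,
    handler: Callable[[dict], None],
    processed_ids: set[str],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Run handler at most once per message_id, retrying with exponential backoff.

    Returns "duplicate", "processed", or "dead_letter".
    """
    if msg.message_id in processed_ids:
        logger.info("[%s] skipping duplicate %s", msg.correlation_id, msg.message_id)
        return "duplicate"

    for attempt in range(max_attempts):
        try:
            handler(msg.body)
        except Exception as exc:
            if attempt == max_attempts - 1:
                break  # out of attempts
            delay = base_delay * 2**attempt  # 0.5 s, 1 s, 2 s, 4 s, ...
            logger.warning(
                "[%s] attempt %d failed (%s), retrying in %.1f s",
                msg.correlation_id, attempt + 1, exc, delay,
            )
            sleep(delay)
        else:
            processed_ids.add(msg.message_id)  # record only after success
            return "processed"

    logger.error("[%s] giving up on %s", msg.correlation_id, msg.message_id)
    return "dead_letter"  # the caller would route the message to the DLQ
```

Because every log line starts with the correlation ID, the retries and the final outcome of one message can be found together in a log search.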

Why do message queues matter?

Without message queues, application components are tightly coupled: if a downstream service is slow or fails, the user feels it immediately. A queue absorbs traffic spikes, keeps messages safe until they are processed (given durable queues and acknowledgments), and lets teams deploy and scale services independently. For businesses, this means higher availability, a better user experience during peak load, and the flexibility to build new features without disrupting existing systems. Queues can also lower infrastructure costs, because work is spread over time instead of requiring servers sized for peak capacity that sit idle most of the day. Development teams ship faster because services are released independently of one another, which shortens time-to-market for new features and reduces the blast radius of each deployment.

Common mistakes with message queues

Messages are acknowledged before processing completes, so a crash silently loses the data with no retry and nothing in a dead letter queue. Teams treat the broker as a database and discover too late that messages expire or cannot be queried. Without idempotent consumers, retries create duplicate orders or charges. Consumer lag is not monitored, so an outage looks like silence until customers complain. Backpressure is not configured, and producers flood the queue until the broker runs out of memory. Message schemas are changed without versioning, causing existing consumers to crash on payloads they can no longer deserialize. Poison messages, payloads that can never be processed successfully, block the entire queue when nothing moves them to a DLQ after repeated failures.
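
The first and last mistakes above, acknowledging too early and letting poison messages block the queue, can both be avoided by acknowledging only after processing and capping retries. The sketch below is a toy in-memory model, not a broker client: `InMemoryQueue`, `Envelope`, `drain`, `parse_amount`, and `max_attempts` are hypothetical names, and in real brokers the requeue position, delivery limits, and dead-letter routing are matters of broker-specific configuration.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass
from typing import Callable


@dataclass
class Envelope:
    message_id: str
    body: str
    attempts: int = 0  # failed processing attempts so far


class InMemoryQueue:
    """Toy broker: a received message stays in flight until it is acked or nacked."""

    def __init__(self, max_attempts: int = 3) -> None:
        self.ready: deque[Envelope] = deque()
        self.in_flight: dict[str, Envelope] = {}
        self.dead_letters: list[Envelope] = []
        self.max_attempts = max_attempts

    def publish(self, message_id: str, body: str) -> None:
        self.ready.append(Envelope(message_id, body))

    def receive(self) -> Envelope | None:
        if not self.ready:
            return None
        env = self.ready.popleft()
        self.in_flight[env.message_id] = env  # handed out, but not deleted yet
        return env

    def ack(self, env: Envelope) -> None:
        del self.in_flight[env.message_id]  # processing succeeded: drop it for good

    def nack(self, env: Envelope) -> None:
        del self.in_flight[env.message_id]
        env.attempts += 1
        if env.attempts >= self.max_attempts:
            self.dead_letters.append(env)  # poison message: park it in the DLQ
        else:
            self.ready.append(env)  # retry later, behind the other messages


def drain(q: InMemoryQueue, handler: Callable[[str], None]) -> list[str]:
    """Consume until empty, acknowledging only after the handler succeeds."""
    done: list[str] = []
    while (env := q.receive()) is not None:
        try:
            handler(env.body)
        except Exception:
            q.nack(env)
        else:
            q.ack(env)
            done.append(env.message_id)
    return done


def parse_amount(body: str) -> None:
    int(body)  # raises ValueError for a payload that can never be parsed


if __name__ == "__main__":
    q = InMemoryQueue(max_attempts=3)
    q.publish("a", "1")
    q.publish("b", "not-a-number")  # poison message
    q.publish("c", "3")
    print(drain(q, parse_amount))                  # ['a', 'c']
    print([e.message_id for e in q.dead_letters])  # ['b']
```

Because the failing message is requeued behind the others and parked after three attempts, message "c" is still processed instead of waiting forever behind "b".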

What are some examples of message queues?

- An online store that instantly confirms orders to the customer in the browser while inventory checks, payment processing, and email confirmations happen asynchronously through a message queue, eliminating user-facing wait times and ensuring checkout conversion does not drop due to slow downstream services.

- A notification service receiving events from various microservices via Kafka and batching them into push notifications, emails, and in-app messages for mobile users, with deduplication to prevent duplicate alerts and priority sorting that delivers urgent messages first.

- A data pipeline distributing raw log files via RabbitMQ to multiple workers that transform, validate, and store the data in a data warehouse in parallel, where each worker can independently fail and restart without impacting the rest of the pipeline.

- A video platform placing uploaded material into a queue for transcoding into multiple resolutions, where each transcoding task is independently processed by workers that scale horizontally with upload volume.

- An IoT system streaming sensor data from thousands of devices via MQTT to a Kafka cluster, where real-time analytics run while raw data is simultaneously archived for historical reporting.
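
The deduplication and priority sorting mentioned in the notification example can be sketched with Python's `heapq`. This is a minimal, hypothetical illustration: the `Event` type, the `build_batch` helper, and the lower-number-is-more-urgent convention are assumptions, consuming from Kafka is left out, and deduplication only spans a single batch (catching duplicates across batches would need a shared store).

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    event_id: str  # unique per event, used for deduplication
    user_id: str
    priority: int  # assumed convention: lower number = more urgent
    text: str


def build_batch(events: list[Event]) -> list[Event]:
    """Drop duplicate events, then order the batch with the most urgent first."""
    seen: set[str] = set()
    heap: list[tuple[int, int, Event]] = []
    for seq, event in enumerate(events):
        if event.event_id in seen:
            continue  # redelivered duplicate (e.g. after a retry): skip it
        seen.add(event.event_id)
        # seq breaks ties, so equal-priority events keep their arrival order
        heapq.heappush(heap, (event.priority, seq, event))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


if __name__ == "__main__":
    incoming = [
        Event("e1", "u1", 2, "Your weekly summary is ready"),
        Event("e2", "u1", 0, "Payment failed"),
        Event("e1", "u1", 2, "Your weekly summary is ready"),  # duplicate delivery
        Event("e3", "u2", 1, "New message from support"),
    ]
    for event in build_batch(incoming):
        print(event.priority, event.text)
```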
