What is MLOps? - Explanation & Meaning

MLOps manages the full ML model lifecycle in production: from training and deployment to monitoring, versioning, and automated retraining pipelines.

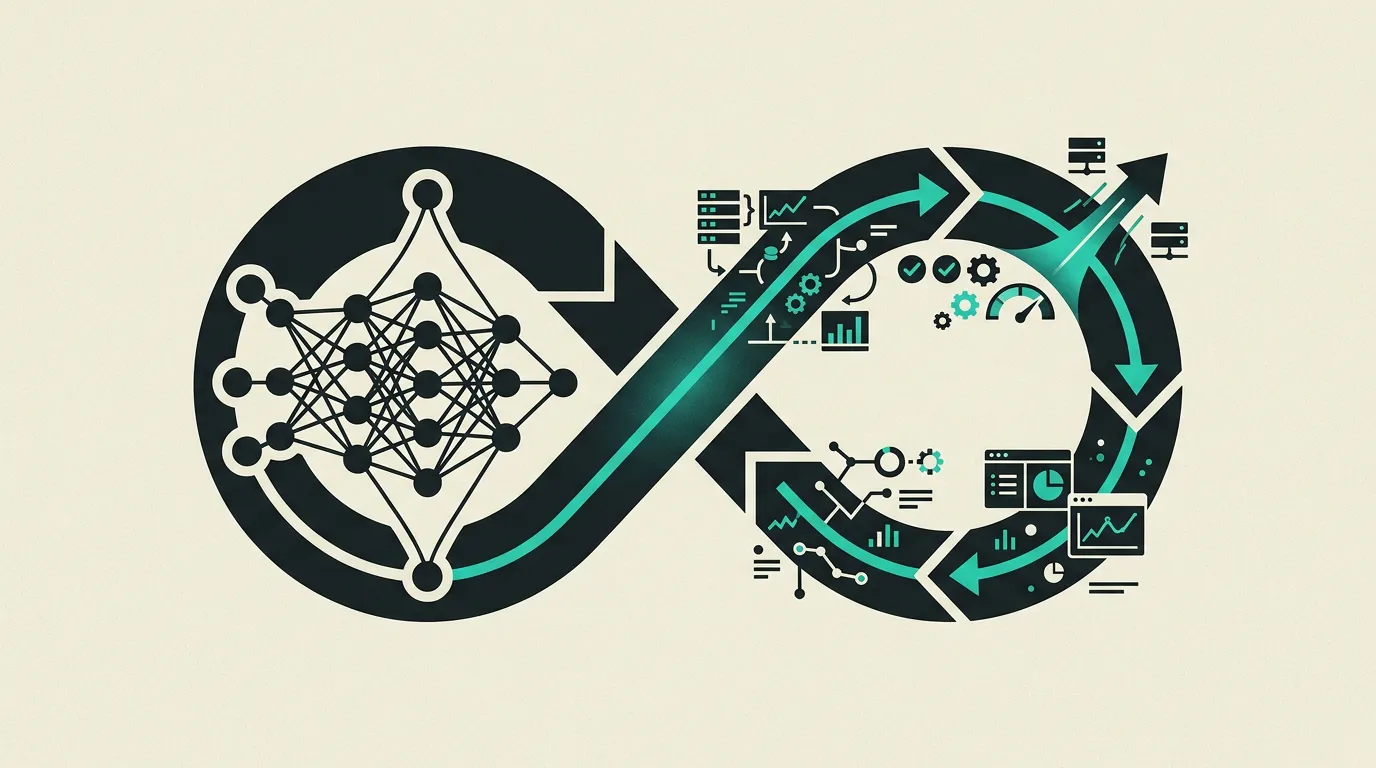

What is MLOps?

MLOps (Machine Learning Operations) brings together software engineering best practices with the unique demands of machine learning to manage the full lifecycle of ML models. It encompasses the structured development, training, validation, deployment, monitoring, and maintenance of models in production environments. Rather than treating ML as a one-off experiment, MLOps establishes repeatable, automated workflows that ensure models perform reliably at scale with continuous quality assurance and rapid iteration cycles.

How does MLOps work technically?

At its core, MLOps extends DevOps to handle challenges unique to machine learning: data versioning, experiment reproducibility, model drift, and the interplay between code, data, and model artifacts.

A mature MLOps pipeline spans several stages. It begins with data ingestion and validation, ensuring incoming data meets schema and quality requirements. Feature engineering follows, typically backed by a feature store such as Feast or Tecton that provides consistent feature computation across training and serving. Model training incorporates hyperparameter optimization via tools like Optuna or Ray Tune, followed by evaluation against baseline metrics and business KPIs. The model registry, offered by platforms like MLflow or Weights & Biases, tracks model versions alongside their training metadata, enabling rollback and auditability.

Deployment automation supports canary releases, blue-green switching, and shadow deployments to minimize production risk. Seldon Core, BentoML, and TorchServe handle model serving across different frameworks and hardware. Data and model versioning through DVC or LakeFS ensures every experiment is fully reproducible. This is critical for debugging production issues and satisfying regulatory requirements in sectors like finance and healthcare.

The rise of LLMOps in 2026 has introduced distinct operational concerns. Prompt management, retrieval-augmented generation pipeline tuning, token cost attribution, and output quality evaluation through frameworks like RAGAS and DeepEval represent new categories of operational work. Guardrails for content safety and hallucination detection are now standard requirements.

Continuous integration for ML goes beyond code testing. Automated pipelines validate data quality, check for feature schema changes, run model evaluation suites, and verify that inference latency stays within service-level objectives.

Observability platforms such as Arize AI, WhyLabs, and Fiddler provide continuous monitoring, detect drift, and trigger automated retraining when predefined thresholds are breached.
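
To make the drift check and retraining trigger concrete, here is a minimal sketch that uses the Population Stability Index (PSI), one common drift statistic. It is not taken from any of the platforms named above. The function names, the equal-width binning, and the 0.2 threshold (a widely used rule of thumb) are assumptions for illustration only.

```python
import math


def population_stability_index(reference, current, bins=10, eps=1e-6):
    """Compare two samples of one numeric feature; higher means more drift."""
    lo, hi = min(reference), max(reference)
    # Equal-width bins over the reference range (assumption; quantile bins are also common).
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range fall into the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def should_retrain(reference, current, threshold=0.2):
    """Hypothetical trigger: request retraining once PSI exceeds the threshold."""
    return population_stability_index(reference, current) > threshold
```

In a real pipeline, `reference` would typically be a sample of the training data for one feature and `current` a recent window of production inputs. A `True` result would start the retraining job rather than simply return a flag.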

How does MG Software apply MLOps in practice?

At MG Software, we embed MLOps practices into every AI solution we ship to production. Our workflow starts with experiment tracking through MLflow, capturing every training run with its parameters, metrics, and artifacts so that any result can be reproduced months later. We build automated training pipelines that trigger on data updates or performance degradation, ensuring models stay current without manual intervention. Real-time monitoring dashboards track precision, recall, latency, and throughput per model endpoint. Automatic alerting notifies our engineers when data drift is detected, allowing proactive intervention before users notice any quality drop. For LLM-powered features, we additionally monitor response quality, hallucination rates, and per-request token costs. Every new model version passes through automated evaluation against golden test sets and business metrics before reaching production traffic.
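
The final step above, evaluating a new model version against a golden test set before it receives traffic, might look roughly like the sketch below. This is an illustrative assumption, not MG Software's actual implementation. It assumes binary labels, and the names `precision_recall`, `may_promote`, and `tolerance` are hypothetical.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def may_promote(golden_labels, candidate_preds, baseline_preds, tolerance=0.0):
    """Allow promotion only if the candidate matches or beats the current
    production model on both metrics, within an optional tolerance."""
    cand_p, cand_r = precision_recall(golden_labels, candidate_preds)
    base_p, base_r = precision_recall(golden_labels, baseline_preds)
    return cand_p >= base_p - tolerance and cand_r >= base_r - tolerance
```

A CI job could call `may_promote` with predictions from both models on the same golden set and fail the build when it returns `False`. Checks on business metrics would sit alongside it.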

Why does MLOps matter?

Without MLOps, ML models often get stuck in the experimentation phase and never reach production. MLOps bridges the gap between data science and production engineering so that models can be deployed reliably, scaled, and maintained over time. In practice, models running without monitoring degrade as input data shifts away from the training distribution. Automated retraining pipelines and drift detection prevent outdated models from silently delivering poor predictions to end users. For organizations operating multiple models in production, MLOps is the difference between a manageable portfolio and an uncontrollable collection of disconnected experiments. Teams that adopt MLOps early typically spend less on operations and iterate faster, which is a competitive advantage in markets where AI-driven decision-making is becoming the standard. Additionally, regulators in sectors like finance and healthcare are imposing stricter requirements on model traceability and reproducibility. MLOps provides the audit trail needed to show which data a model was trained on, which version produced a given decision, and when it was last validated, so regulatory compliance becomes a natural byproduct of good MLOps practices.

Common mistakes with MLOps

A common mistake is deploying models without monitoring for data drift and model degradation. Models that perform excellently at launch can deteriorate within weeks as input data changes, so always implement automatic drift detection and retraining triggers. Teams also frequently underestimate the importance of feature stores: when features are computed differently during inference than during training, the resulting training-serving skew is difficult to debug and produces unreliable predictions. A third oversight is the absence of model version control, which makes it impossible to roll back to a previous version when a new model underperforms. Fourth, organizations regularly forget to monitor token costs and latency for LLM-based systems, leading to unexpectedly high bills and slow response times that frustrate users. A fifth common mistake is failing to document the relationship between data, model artifacts, and configuration. When production issues arise, the inability to trace which exact data version and hyperparameters produced the problematic model significantly delays diagnosis and resolution. Establish lineage tracking from data ingestion through deployment as a foundational practice. Finally, teams that neglect end-to-end pipeline testing in staging environments frequently discover integration failures only after they reach production and affect real users.
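
As a rough illustration of lineage tracking, the sketch below records a fingerprint of the training data together with the hyperparameters and code version, then derives a stable identifier from all three. The record layout and the names `lineage_record` and `code_version` are assumptions. In practice, tools such as DVC or MLflow would store this information for you.

```python
import hashlib
import json


def fingerprint(payload: bytes) -> str:
    """SHA-256 hex digest, used here as a content fingerprint."""
    return hashlib.sha256(payload).hexdigest()


def lineage_record(data_bytes: bytes, hyperparameters: dict, code_version: str) -> dict:
    """Tie a model to the exact data, hyperparameters, and code that produced it."""
    record = {
        "data_sha256": fingerprint(data_bytes),
        "hyperparameters": hyperparameters,
        "code_version": code_version,  # e.g. a Git commit hash (assumption)
    }
    # sort_keys makes the serialization, and therefore the ID, independent of
    # the order in which the hyperparameters were written down.
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    record["lineage_id"] = fingerprint(canonical)[:16]
    return record
```

Storing the `lineage_id` next to each deployed model version lets an engineer go from a misbehaving endpoint back to the exact inputs of the training run.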

What are some examples of MLOps?

- A fintech company that set up an MLOps pipeline to automatically retrain their fraud detection model when precision drops below a threshold, ensuring the model stays current with new fraud patterns.

- An e-commerce platform using MLOps to automatically update their recommendation model weekly with new user data, evaluate via A/B tests, and roll out via canary deployments.

- An AI startup using MLflow and Weights & Biases to track hundreds of experiments, select the best model configuration, and automatically deploy to production via a CI/CD pipeline.

- An insurance company that uses MLOps to continuously monitor their claims assessment model for concept drift. When new claim types emerge that the model fails to recognize, the system automatically triggers retraining with labeled examples of the new categories, keeping prediction accuracy above 95%.

- A healthcare organization using DVC and MLflow to maintain full reproducibility of diagnostic models. Every model version is traceable to the exact training data and hyperparameters, which is essential for audits by medical regulators and for demonstrating model reliability during certification processes.
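
The canary rollout mentioned in the e-commerce example can be sketched as deterministic, hash-based traffic splitting. This is a simplified assumption of how such routing might work, not a description of any specific serving platform. The names `bucket_for`, `route`, and `BUCKETS` are hypothetical.

```python
import hashlib

BUCKETS = 10_000  # routing granularity of 0.01% (assumption)


def bucket_for(user_id: str) -> int:
    """Map a user to a stable bucket so they always see the same model version."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % BUCKETS


def route(user_id: str, canary_fraction: float) -> str:
    """Send roughly `canary_fraction` of users to the new model, the rest to stable."""
    cutoff = round(canary_fraction * BUCKETS)
    return "canary" if bucket_for(user_id) < cutoff else "stable"
```

Because the bucket depends only on the user ID, raising `canary_fraction` from 0.1 to 0.5 keeps every existing canary user on the new model and only adds new ones, which keeps A/B comparisons consistent during the rollout.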
