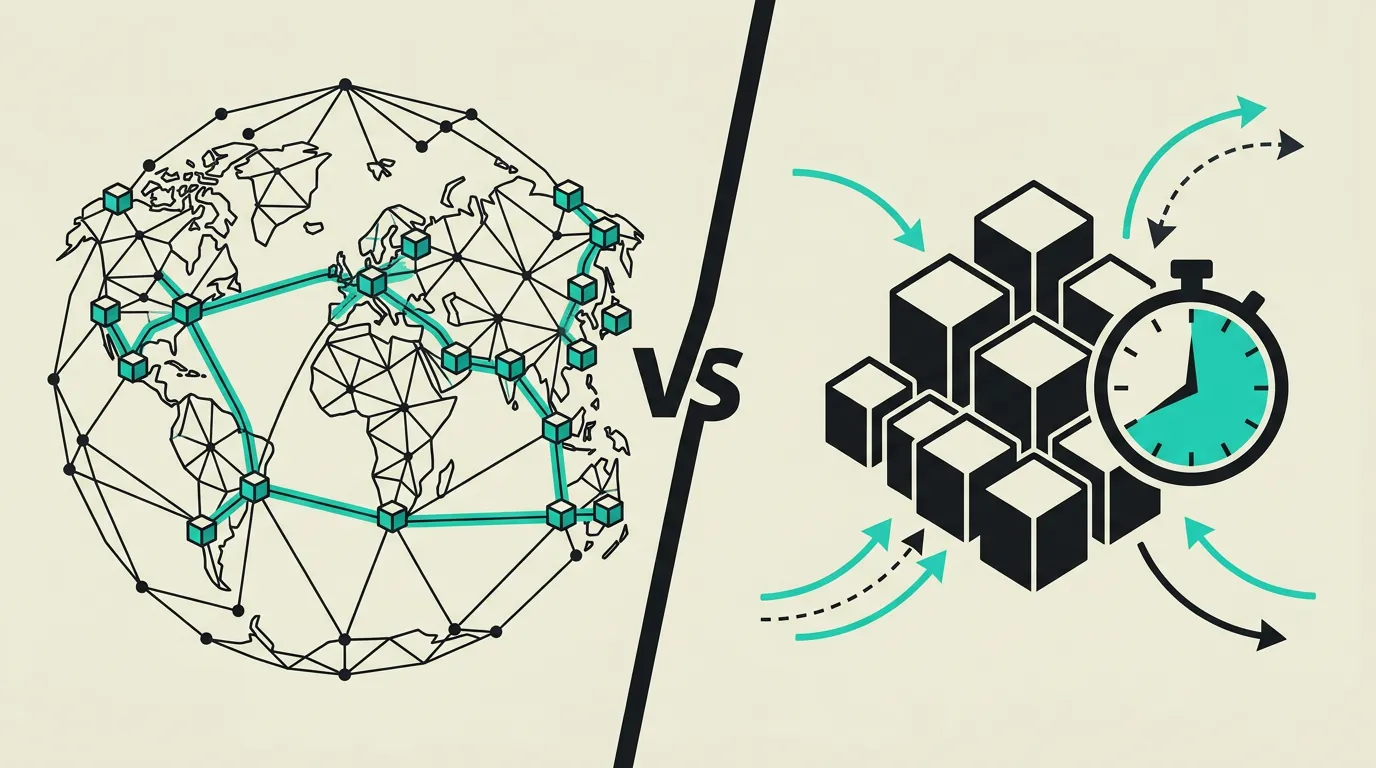

Cloudflare Workers vs AWS Lambda: Edge or Regional Compute?

Zero cold starts at 300+ edge locations or 15-minute execution times in AWS regions? Workers and Lambda take fundamentally different serverless paths.


Background

The serverless computing market has split into two distinct camps: edge-first platforms like Cloudflare Workers that minimize global latency, and region-based services like AWS Lambda that prioritize flexibility and ecosystem depth. This split reflects a broader industry trend where more logic moves to the edge for faster user experiences while heavier backend tasks remain in central regions. Your choice depends on whether latency or feature breadth matters more to your specific use case, and whether your team has already invested in the Cloudflare or AWS ecosystem.

Cloudflare Workers

Cloudflare Workers is an edge computing platform that executes code at over 300 locations worldwide across Cloudflare's global network. Workers run in V8 isolates (V8 is the JavaScript engine used in Chrome) rather than in containers, which is how the platform delivers what Cloudflare describes as 0ms cold starts, with startup overhead far below that of container-based solutions. The platform supports JavaScript, TypeScript, Rust, and Python, and integrates with Cloudflare's expanding ecosystem: Workers KV for key-value storage, Durable Objects for stateful logic, R2 object storage, D1 SQL databases, and Workers AI for inference at the edge.
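To give a feel for the programming model, a Worker in module syntax is an object with a `fetch` method that receives a Web-standard `Request` and returns a `Response`. The following is a minimal sketch, not a production handler: the `/hello` route and the `greeting` helper are assumptions made up for illustration.

```typescript
// Pure helper so the routing logic can be checked without a network.
// `greeting` is a hypothetical name used only in this example.
function greeting(pathname: string): { status: number; body: string } {
  if (pathname === "/hello") {
    return { status: 200, body: "Hello from the edge" };
  }
  return { status: 404, body: "Not found" };
}

// A Worker in module syntax: an object exposing an async fetch handler.
const worker = {
  async fetch(request: Request): Promise<Response> {
    const { pathname } = new URL(request.url);
    const { status, body } = greeting(pathname);
    return new Response(body, {
      status,
      headers: { "content-type": "text/plain; charset=utf-8" },
    });
  },
};

// The runtime looks for the handler on the module's default export.
export default worker;
```

Keeping the routing decision in a plain function means it can be unit tested in any JavaScript runtime, independent of the edge environment.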

AWS Lambda

AWS Lambda is the pioneer of serverless computing and the most widely used Function-as-a-Service platform in the world with millions of active functions. Lambda runs in over 30 AWS regions and supports virtually any runtime including Node.js, Python, Java, Go, .NET, and Ruby, plus custom runtimes via container images. With a maximum execution time of 15 minutes, up to 10 GB memory per function, and deep integration into the complete AWS ecosystem, Lambda is suitable for everything from simple API endpoints to complex event-driven data processing pipelines.
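For comparison, a Lambda function on the Node.js runtime is an exported async handler that receives an event object. The sketch below assumes an HTTP-style event carrying a `rawPath` field (the shape used by the HTTP payload format 2.0); `HttpEvent`, `HttpResult`, and `route` are hand-written, hypothetical names that cover only a small subset of a real event. Timeout and memory are set in the function's configuration rather than in code.

```typescript
// Hand-written subset of an HTTP event (payload format 2.0).
// Real events carry many more fields; this is an assumption for illustration.
interface HttpEvent {
  rawPath: string;
}

interface HttpResult {
  statusCode: number;
  headers: Record<string, string>;
  body: string;
}

// Pure routing logic, kept separate from the handler so it is easy to test.
function route(path: string): HttpResult {
  const headers = { "content-type": "application/json" };
  if (path === "/hello") {
    return {
      statusCode: 200,
      headers,
      body: JSON.stringify({ message: "Hello from a region" }),
    };
  }
  return { statusCode: 404, headers, body: JSON.stringify({ error: "Not found" }) };
}

// Lambda invokes the exported handler once per event.
export const handler = async (event: HttpEvent): Promise<HttpResult> => {
  return route(event.rawPath);
};
```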

What are the key differences between Cloudflare Workers and AWS Lambda?

| Feature | Cloudflare Workers | AWS Lambda |
|---|---|---|
| Cold starts | 0ms: V8 isolates start without a noticeable cold start, consistently on every request | 100ms-10s depending on runtime, memory, and VPC configuration; Provisioned Concurrency can keep instances warm |
| Execution limit | 10 ms of CPU time per request on the free plan; up to 5 minutes of CPU time per request on the paid plan (30 seconds by default) | Timeout configurable up to a maximum of 15 minutes per invocation (the default is 3 seconds), suitable for long-running batch tasks and data pipelines |
| Locations | 300+ edge locations worldwide where code automatically runs close to the end user | 30+ AWS regions, manually configured per region, with Lambda@Edge as a limited edge option |
| Runtimes | JavaScript/TypeScript natively, Rust and Python via WebAssembly, no Java or .NET support | Node.js, Python, Java, Go, .NET, Ruby, and custom runtimes via container images up to 10 GB |
| Ecosystem | KV Storage, Durable Objects, R2, D1, Queues, Workers AI, and Hyperdrive for database connectivity | Full AWS ecosystem: S3, DynamoDB, SQS, SNS, Step Functions, EventBridge, and 200+ other services |
| Pricing model | Free 100K requests/day; paid plan from $5/month with 10M requests included, then $0.30/million requests plus $0.02/million CPU-milliseconds | Free 1M requests/month, then $0.20/million requests plus $0.0000166667/GB-second of compute time |
| Storage options | KV for key-value (eventually consistent), R2 for S3-compatible objects with zero egress, D1 for SQLite-based SQL | Full access to S3, DynamoDB, EFS, Aurora, and every other AWS storage product via IAM roles |
| Observability | `wrangler tail` for live logs, Workers Analytics, and integration with external logging via Logpush | CloudWatch Logs and Metrics by default, X-Ray tracing, and integration with Datadog, New Relic, and Sentry |
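To make the Lambda pricing row concrete, here is a rough cost sketch that uses only the Lambda rates quoted in the table. The function name and parameters are assumptions for illustration, and the estimate deliberately ignores any free compute allowance, architecture-specific rates, and other AWS charges such as data transfer.

```typescript
// Rates taken from the Lambda pricing row above.
const FREE_REQUESTS_PER_MONTH = 1_000_000;
const USD_PER_MILLION_REQUESTS = 0.2;
const USD_PER_GB_SECOND = 0.0000166667;

// Hypothetical helper: estimates one month of Lambda cost in USD.
function estimateLambdaMonthlyUsd(
  requests: number,
  avgDurationMs: number,
  memoryMb: number,
): number {
  // Request charge applies only beyond the free monthly requests.
  const billableRequests = Math.max(0, requests - FREE_REQUESTS_PER_MONTH);
  const requestCost = (billableRequests / 1_000_000) * USD_PER_MILLION_REQUESTS;

  // Compute is billed in GB-seconds: duration (s) x configured memory (GB).
  const gbSeconds = requests * (avgDurationMs / 1000) * (memoryMb / 1024);
  const computeCost = gbSeconds * USD_PER_GB_SECOND;

  return requestCost + computeCost;
}
```

For example, 10 million requests per month at an average of 100 ms and 512 MB amounts to 500,000 GB-seconds, so roughly $8.33 of compute plus $1.80 for the 9 million billable requests, about $10.13 in total under these assumptions.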

When to choose which?

Choose Cloudflare Workers when...

Choose Cloudflare Workers when your endpoints require minimal latency for users spread across the globe, when you need edge logic for A/B testing, geo-routing, auth middleware, or real-time personalization, or when your workloads are lightweight and fit within the CPU time limits. Workers are ideal for API gateways, request transformation, and content personalization. Also choose Workers when you benefit from the Cloudflare ecosystem with R2 for zero-egress storage, D1 for databases, and Workers AI for inference at the edge.
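One of the edge use cases above, A/B testing, usually comes down to assigning each visitor to a variant deterministically, so the same user keeps seeing the same version. A minimal sketch, assuming a stable user identifier is available (for example from a cookie): the FNV-1a hash is simply one reasonable choice and not something Workers prescribes, and `assignVariant` is a hypothetical helper name.

```typescript
// 32-bit FNV-1a hash over the string's UTF-16 code units.
// Small and dependency-free, which is enough for bucketing users.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // multiply by the FNV prime with 32-bit wraparound
  }
  return hash >>> 0; // interpret the result as an unsigned 32-bit integer
}

// Maps a user ID to one of the given variants; the same ID always maps to the same variant.
function assignVariant(userId: string, variants: string[]): string {
  if (variants.length === 0) {
    throw new Error("at least one variant is required");
  }
  return variants[fnv1a(userId) % variants.length];
}
```

Inside a Worker, the `fetch` handler could call `assignVariant` with the ID read from the request and then serve or rewrite to the matching version, without a round trip to an origin server.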

Choose AWS Lambda when...

Choose AWS Lambda when your functions need long execution times (up to 15 minutes), when you require specific runtimes like Java, .NET, or Go, or when deep integration with the broader AWS ecosystem (S3, DynamoDB, Step Functions, EventBridge) is essential for your architecture. Lambda is also the better choice for heavy compute tasks such as batch processing, video encoding, and machine learning inference that require more memory and CPU time than Workers can provide.

What is the verdict on Cloudflare Workers vs AWS Lambda?

Cloudflare Workers and AWS Lambda take two fundamentally different approaches to serverless computing, and each excels in its own domain. Workers lead at the edge, with 0ms cold starts at 300+ locations worldwide, which suits latency-sensitive endpoints, auth middleware, and real-time personalization. Lambda offers broader functionality: support for virtually any programming language, execution times of up to 15 minutes, up to 10 GB of memory, and access to the full AWS ecosystem of over 200 services. For API endpoints, edge middleware, and lightweight transformations, Workers comes out ahead on speed and developer experience. For complex backend workloads, long-running tasks, and deep AWS integrations, Lambda is the stronger choice. Many organizations combine both: Workers for the edge layer and Lambda for heavier backend logic.

Which option does MG Software recommend?

At MG Software, we deliberately choose Cloudflare Workers when latency and global availability are crucial, such as for API gateways, auth middleware, geo-routing, and real-time personalization. The 0ms cold starts and growing ecosystem with R2, D1, and Workers AI make it a powerful platform for edge workloads. For more complex backend logic, we use Supabase Edge Functions or recommend AWS Lambda when deep integration with the AWS ecosystem is needed. The combination of Vercel Edge Functions for frontend and Supabase Edge Functions for backend covers most use cases our clients have without the complexity of a full AWS setup. For clients with existing AWS architectures, we design Lambda functions that integrate seamlessly.

Migrating: what to consider?

Migrating from Lambda to Workers means porting code to the Workers runtime, which is built around Web-standard APIs and offers only partial Node.js compatibility (enabled through the `nodejs_compat` compatibility flag). npm packages that depend on Node.js-specific APIs such as fs, net, or child_process may not work in Workers, or may work only in part. Migrating from Workers to Lambda is usually simpler, but it requires an HTTP frontend such as API Gateway or a Lambda function URL. Be aware of differences in storage: KV/R2 and S3/DynamoDB are separate stores, so data has to be migrated even though R2 exposes an S3-compatible API. Test performance thoroughly after migrating, since latency patterns change fundamentally between edge and regional execution.
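One way to limit the rewrite cost is to keep business logic in a platform-neutral function and wrap it in thin adapters per platform. The sketch below is an assumption about how such a split might look, not a prescribed migration path: `handleCore`, `PortableRequest`, and `PortableResponse` are hypothetical names, and `LambdaHttpEvent` is a hand-written subset of the HTTP payload format 2.0.

```typescript
interface PortableRequest {
  method: string;
  path: string;
}

interface PortableResponse {
  status: number;
  body: string;
}

// Platform-neutral logic: uses no Node.js-only or Workers-only APIs.
function handleCore(req: PortableRequest): PortableResponse {
  if (req.method === "GET" && req.path === "/health") {
    return { status: 200, body: "ok" };
  }
  return { status: 404, body: "not found" };
}

// Workers adapter: translates Web-standard Request/Response objects.
// In a Worker entry file this object would be the default export.
const worker = {
  async fetch(request: Request): Promise<Response> {
    const url = new URL(request.url);
    const res = handleCore({ method: request.method, path: url.pathname });
    return new Response(res.body, { status: res.status });
  },
};

// Hand-written subset of the Lambda HTTP event (payload format 2.0).
interface LambdaHttpEvent {
  rawPath: string;
  requestContext: { http: { method: string } };
}

// Lambda adapter: translates the event into the portable shape and back.
// In a Lambda entry file this function would be exported as `handler`.
const lambdaHandler = async (event: LambdaHttpEvent) => {
  const res = handleCore({
    method: event.requestContext.http.method,
    path: event.rawPath,
  });
  return { statusCode: res.status, body: res.body };
};
```

With this split, only the two adapters and the storage bindings change during a migration, and `handleCore` can be tested once and reused on both platforms.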
