A/B Testing Explained: From Hypothesis to Conversion Lift

A/B testing compares variants of a page or feature with real users to find what converts best. Learn how to design experiments, reach significance, and scale results.

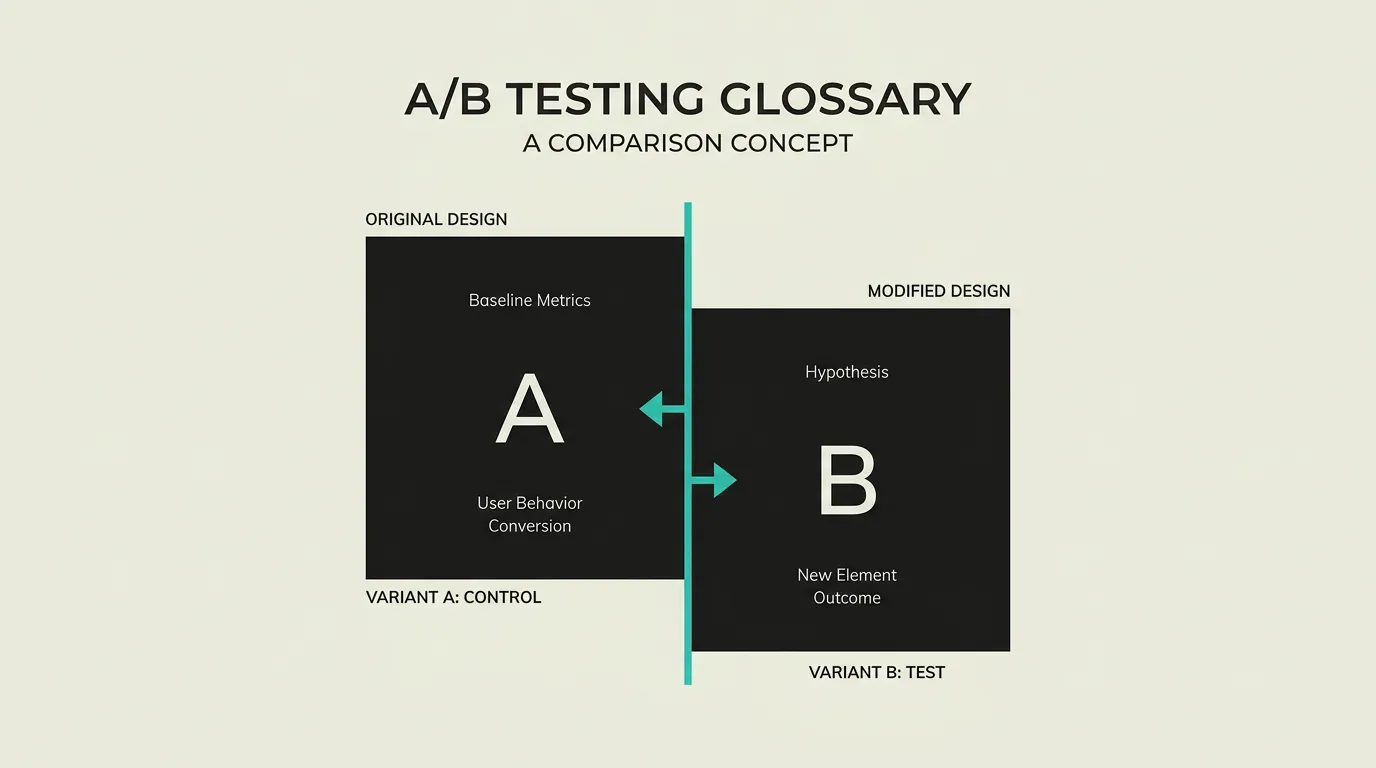

A/B testing, also known as split testing, is an experimental method in which two or more versions of a web page, email, or app element are simultaneously shown to different user groups. The purpose is to determine, through statistical evidence, which variant performs better on a predefined metric such as conversion rate, click-through rate, or average order value. By grounding decisions in data rather than opinions, A/B testing minimises the risk of expensive design mistakes and drives continuous, measurable improvement.

How does A/B testing work technically?

In an A/B test, incoming traffic is randomly divided across two or more variants. Variant A serves as the control, representing the current version, while variant B (or C, D, and so on) contains the proposed change. Assignment can happen server-side or client-side. Server-side testing determines the variant before the HTML reaches the browser, which eliminates visual flicker and avoids the cost of modifying the page after load. Client-side testing applies modifications through JavaScript after the page loads, making it simpler to set up but introducing the risk of flicker.

Statistical significance, typically assessed at a 95% confidence level (a significance threshold of 0.05), indicates whether the observed difference in conversion is unlikely to be caused by chance alone. The required sample size is calculated beforehand using a power analysis that accounts for the expected effect size, the current baseline conversion rate, the desired confidence level, and the statistical power (commonly 80%). An undersized sample produces unreliable results, while an oversized sample wastes time and traffic.

Bayesian statistics offer an alternative to the classic frequentist approach. A frequently cited advantage is that results can be monitored continuously with less exposure to the so-called peeking problem, where stopping a test too early leads to false-positive conclusions. A stopping rule agreed in advance is still advisable. Multivariate testing extends the concept by testing multiple elements simultaneously in every possible combination, making interaction effects visible. Multi-armed bandit algorithms distribute traffic dynamically, automatically routing more visitors to the better-performing variant over time.

Feature flags integrate experimentation into the deployment pipeline so tests are managed as part of the release process. Platforms such as Optimizely, VWO, LaunchDarkly, and Statsig provide end-to-end workflows for experiment management, audience segmentation, result analysis, and reporting.

Guardrail metrics, secondary metrics monitored alongside the primary goal, help detect unintended side effects of a winning variant. For example, a test that boosts sign-ups might inadvertently increase churn if the experience sets wrong expectations. Mutual exclusion groups ensure that users participating in one experiment are excluded from conflicting tests, preventing interaction effects from distorting results.
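
The sample size and significance steps described above can be made concrete with a short calculation. The sketch below is illustrative only: it assumes a two-sided two-proportion z-test with the normal approximation, and the function names and default values (`alpha=0.05`, `power=0.80`) are assumptions chosen for the example, not part of any particular platform.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant (two-sided two-proportion test)."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)  # conversion rate we hope to detect
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_power = NormalDist().inv_cdf(power)          # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


if __name__ == "__main__":
    # Hypothetical inputs: 5% baseline conversion, hoping to detect a 20% relative lift.
    print(sample_size_per_variant(baseline=0.05, relative_lift=0.20))
    # Hypothetical results: 500 of 10,000 convert on A, 600 of 10,000 on B.
    print(two_proportion_p_value(500, 10_000, 600, 10_000))
```

With these hypothetical inputs, the calculation asks for roughly 8,000 visitors per variant, which shows why small expected lifts on low baseline rates need a lot of traffic. A p-value below the chosen threshold of 0.05 would be read as statistically significant at the 95% confidence level.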

How does MG Software apply A/B testing in practice?

MG Software implements A/B testing through feature flags and server-side experiments in Next.js, leveraging Edge Middleware for flicker-free variant assignment. We guide clients through formulating clear hypotheses before any experiment begins: what element is being changed, which metric is being measured, and what outcome is expected? We then set up experiments targeting call-to-action buttons, forms, landing pages, and pricing presentations. Results are analysed for statistical significance and segmented by device type, traffic source, and user cohort. After each experiment concludes, we document the outcome in a centralised experiment log so the entire team learns from past tests and avoids repeating hypotheses that have already been validated or disproven. This data-driven approach replaces internal debates based on gut feeling with evidence, consistently delivering higher conversions and deeper understanding of user behaviour.
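
The idea behind flicker-free server-side assignment is that the variant is decided from data already available on the server, before any HTML is sent. A minimal, language-agnostic sketch of one common way to do this, deterministic hash bucketing, is shown below in Python. It is not MG Software's Next.js code; `assign_variant`, the experiment name, and the user IDs are hypothetical names introduced for illustration.

```python
import hashlib


def assign_variant(user_id, experiment, variants=("control", "treatment")):
    """Map a user to a variant deterministically, with equal traffic shares."""
    # Including the experiment name means the same user can land in
    # different buckets across different experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # Hypothetical identifiers, for illustration only.
    for uid in ("user-1", "user-2", "user-3"):
        print(uid, assign_variant(uid, "pricing-page-test"))
```

Because the assignment depends only on the user ID and the experiment name, a returning visitor sees the same variant on every request without a database lookup, provided a stable identifier is available.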

Why does A/B testing matter?

A/B testing replaces assumptions and gut feelings with hard, measurable data. Rather than debating which design is better, you let real users reveal the answer through their behaviour. This leads to demonstrably higher conversions and prevents costly redesigns that are driven by opinions rather than evidence. Organisations that experiment systematically build a culture in which every change is treated as a hypothesis to be validated. Over time, this creates a compounding advantage: dozens of individually modest improvements that collectively produce a significant uplift in revenue and customer satisfaction.

Common mistakes with A/B testing

The most common error is stopping a test prematurely as soon as one variant looks promising. Without sufficient data, the observed difference may be pure chance, leading to flawed decisions. Another pitfall is changing too many elements at once within a single A/B test, which makes it impossible to isolate which specific change drove the result. Many teams also fail to account for seasonal patterns and weekday versus weekend differences in traffic. A test that only runs over a weekend is not representative of weekday behaviour. Finally, the importance of a clear hypothesis is frequently underestimated. Without articulating what you expect and why before the test begins, an experiment becomes an aimless gamble rather than a structured learning opportunity.
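
The cost of stopping early can be illustrated with a small simulation of A/A tests, in which both variants have the same true conversion rate, so every "significant" result is a false positive. This is a sketch under stated assumptions: the run count, traffic volume, number of interim looks, and the 10% conversion rate are arbitrary illustrative values, and the check used is a pooled two-proportion z-test.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions): no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_peeking(runs=300, users_per_arm=2000, looks=10,
                     rate=0.10, alpha=0.05, seed=1):
    """Return (false-positive rate when peeking, false-positive rate at the end)."""
    rng = random.Random(seed)
    step = users_per_arm // looks
    flagged_at_any_look = 0
    flagged_at_end = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        flagged = False
        for look in range(1, looks + 1):
            # Both arms share the same true rate: any "winner" is noise.
            conv_a += sum(rng.random() < rate for _ in range(step))
            conv_b += sum(rng.random() < rate for _ in range(step))
            significant = p_value(conv_a, look * step, conv_b, look * step) < alpha
            flagged = flagged or significant
        flagged_at_any_look += flagged   # would have stopped at some interim look
        flagged_at_end += significant    # significant only at the planned end
    return flagged_at_any_look / runs, flagged_at_end / runs


if __name__ == "__main__":
    peeking, fixed = simulate_peeking()
    print(f"false positives when stopping at first significant look: {peeking:.1%}")
    print(f"false positives when evaluating only at the end: {fixed:.1%}")
```

Evaluating only at the planned sample size keeps the false-positive rate near the nominal 5%, whereas declaring a winner at the first significant interim look can only raise it, and with ten looks it usually raises it substantially.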

What are some examples of A/B testing?

- A SaaS company testing two variants of their pricing page: one highlighting monthly prices and one featuring annual prices. The annual variant increases average contract value by 23% because users commit to longer terms when the discount is visually prominent.

- An e-commerce site testing button colour and copy for "Add to Cart". An orange button labelled "Order Now" generates 15% more clicks than a blue button with "Add to Cart". Because colour and copy changed together, the result shows that the combination wins, but not whether the colour or the more urgent microcopy drove the lift.

- A lead generation site comparing a short form with three fields against an extended form with eight fields. The short form produces 60% more submissions, while the longer form delivers higher quality leads with a better sales conversion rate.

- A newsletter that tests two subject lines on a random 10% sample of the mailing list. The winning variant is automatically sent to the remaining 90%, boosting the overall open rate by 18% compared to the baseline.

- An online travel platform that experiments with search result ordering: price ascending versus relevance sorted. Relevance sorting leads to 9% more bookings because users find a suitable offer more quickly.
