What is Caching? - Definition & Meaning

Caching stores frequently accessed data closer to the user at the browser, CDN, and server levels, which can make page loads dramatically faster.

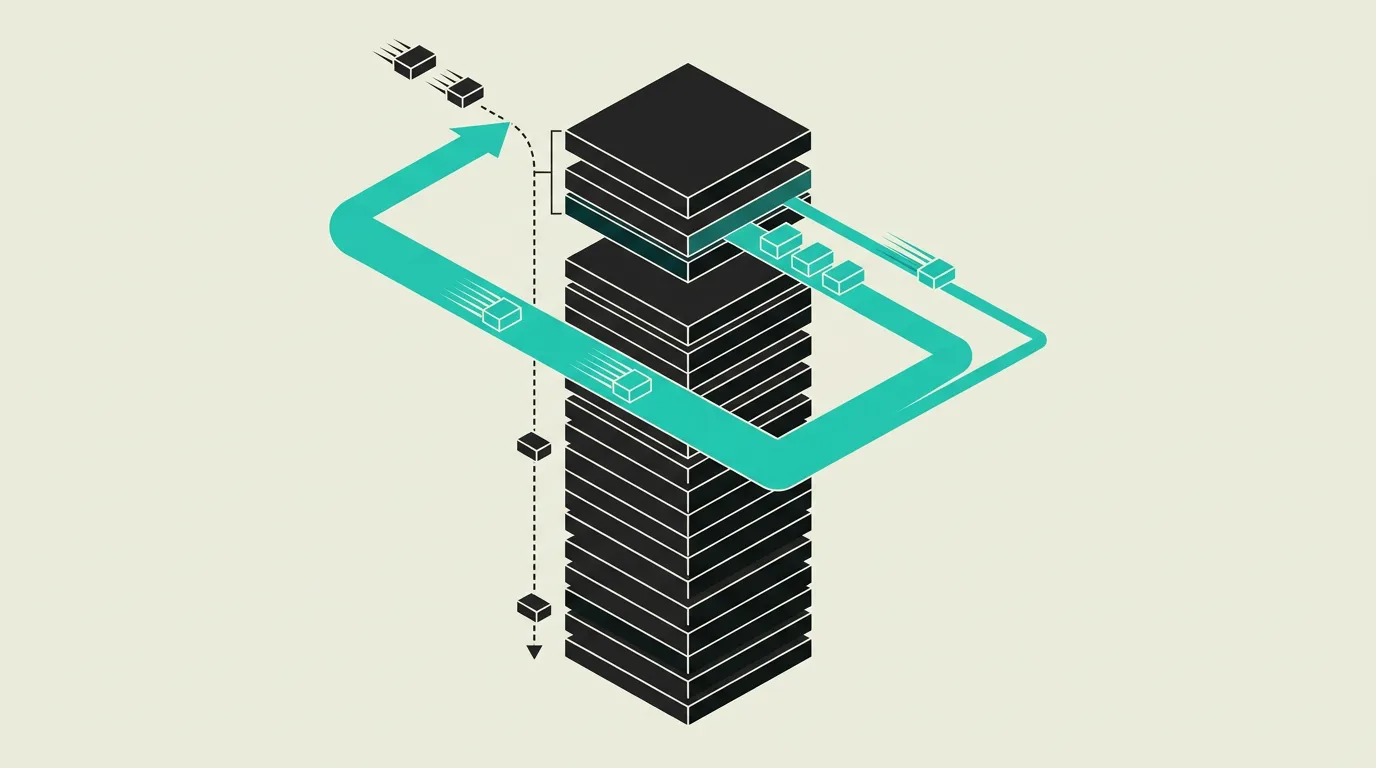

What is Caching?

Caching is the temporary storage of data in a location that is faster to access, so that future requests can be served without querying the original source again. It reduces the load on databases and servers, lowers network latency, and significantly improves user experience. Caching is one of the most effective performance optimizations in software development and occurs at multiple layers, from the end user's browser to in-memory data stores on the server.

How does Caching work technically?

Caching occurs at multiple layers, each with distinct characteristics. Browser caching stores static assets (CSS, JS, images, fonts) locally, controlled by Cache-Control directives (max-age, immutable) and revalidated with ETag or Last-Modified headers through conditional requests. CDN caching places copies on edge servers worldwide for fast delivery, driven by the s-maxage and stale-while-revalidate directives. Server-side caching with Redis or Memcached stores computed results, database query results, and API responses in memory, typically with sub-millisecond access. Application-level caching via frameworks (Next.js ISR with on-demand revalidation, React Query with staleTime, SWR with deduplication) caches pages or API responses inside the application itself.

The hardest challenge in caching is invalidation: determining when cached data is stale. Strategies include TTL-based invalidation (data expires automatically after a configurable period), event-based invalidation (the cache is cleared when underlying data changes, via database triggers or message queue events), tag-based invalidation (grouping related cache entries and invalidating them in one operation), and stale-while-revalidate (serving cached data immediately while fresh data is fetched in the background).

Cache-aside (lazy loading) is the most common pattern: the application checks the cache first, and on a miss, queries the source and caches the result for subsequent requests. Write-through caching writes to the cache and the database as part of the same operation, which keeps the two consistent at the cost of higher write latency. Write-behind caching writes to the cache first and asynchronously to the database, which is faster but introduces a risk of data loss on crashes.

Cache stampede prevention via distributed locking or request coalescing ensures that during a mass cache miss, not all requests hit the database at the same time. Consistent hashing distributes cache keys across a ring of servers so that adding or removing a node only remaps a fraction of the keys instead of the entire cache, which prevents massive cache misses during scaling events. Cache warming proactively populates the cache before anticipated peak load, for example by prefetching popular product pages after a deployment.

The HTTP Vary response header tells caches which request headers, such as Accept-Language or Accept-Encoding, are part of the cache key, so that different representations of the same URL are cached separately. Bloom filters can quickly determine that a key is definitely not present, so requests for missing keys can skip the lookup, reducing load on the cache layer and on the source behind it.
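
The cache-aside pattern with TTL-based and event-based invalidation described above can be sketched roughly as follows. This is a minimal illustration, not a production implementation: the `TTLCache` class is a hypothetical in-memory stand-in for a store such as Redis, and the injectable `clock` parameter is an assumption added only to make expiry easy to test.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Hypothetical in-memory stand-in for a cache store such as Redis."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        # key -> (expiry timestamp, cached value)
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        """Cache-aside: check the cache first, query the source on a miss."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # hit: the entry exists and has not expired
        value = loader()  # miss or expired entry: go to the original source
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key: str) -> None:
        """Event-based invalidation: drop the entry when the source changes."""
        self._entries.pop(key, None)
```

A caller would wrap an expensive query, for example `cache.get_or_load("product:42", lambda: fetch_product(42))`, where `fetch_product` is a placeholder for whatever reads the original source.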

How does MG Software apply Caching in practice?

At MG Software, we implement a multi-layer caching strategy in every project. Next.js ISR caches pages at build time with on-demand revalidation for content updates. Vercel's edge cache serves static content with content-hashed URLs and long max-age headers. We use Redis for server-side caching of expensive database queries and external API responses, with TTL-based invalidation and cache-key namespacing per tenant. React Query on the client side deduplicates concurrent requests and shows cached data while fresh data is fetched. After every deployment, we automatically trigger cache warming for the most visited pages. Cache key schemas are documented in the repository so the team understands what data is cached and when invalidation occurs. For multi-tenant projects, we isolate cache namespaces per customer with automated tests that detect cross-tenant leakage. We monitor cache hit rates through dashboards to measure effectiveness and continuously optimize the configuration.
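
As an illustration of per-tenant cache-key namespacing, a key builder might look like the sketch below. The `tenant_key` function and the `tenant:<id>:<resource>:<identifier>` layout are assumptions made for this example, not the actual schema used in any project.

```python
def tenant_key(tenant_id: str, resource: str, identifier: str) -> str:
    """Build a namespaced cache key such as 'tenant:acme:invoice:17'."""
    for part in (tenant_id, resource, identifier):
        # Reject empty parts and the separator itself, so that two different
        # (tenant, resource, identifier) triples can never produce the same key.
        if not part or ":" in part:
            raise ValueError(f"invalid key part: {part!r}")
    return f"tenant:{tenant_id}:{resource}:{identifier}"
```

Rejecting the separator inside key parts is one simple way to rule out cross-tenant collisions; an automated leakage test can then assert that keys built for two different tenants never match.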

Why does Caching matter?

Caching is often the difference between an application that feels snappy and one that feels slow. By storing frequently accessed data close to the user, you can reduce latency from hundreds of milliseconds to single digits. Widely cited industry figures suggest that every additional 100 milliseconds of load time can cost roughly 1% in conversions. A well-configured caching strategy can reduce database load by 80 to 95 percent for read-heavy workloads, yielding direct savings on infrastructure costs. For applications with global users, CDN caching can shorten latency from hundreds of milliseconds to tens, largely independent of the geographic distance to the origin server. This translates into better Core Web Vitals scores, which can contribute to higher Google rankings, lower infrastructure costs, and better conversion rates. For high-traffic businesses, a solid caching strategy is the difference between a stable application and one that buckles under peak load.

Common mistakes with Caching

- Keys are vague, such as user-data, so tenants overwrite each other's cached data.

- TTLs are days long for prices that change hourly, so users see incorrect amounts.

- After deploys, stale JavaScript persists because nobody purges the CDN or uses content-hashed URLs.

- Write-through paths drift out of sync with the database on partial failures and timeouts.

- A shared cold key triggers a thundering herd because there is no locking or request coalescing.

- Cache hit rates are not monitored, so nobody knows whether the cache is actually effective.

- Cache warm-up is missing after deployments, so the first hundreds of users experience slow responses while the cache rebuilds.

- Serialization overhead is underestimated: large objects are cached as JSON when a more compact format such as MessagePack could noticeably reduce cache memory use.
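
The thundering-herd mistake mentioned above can be avoided with request coalescing. The sketch below shows the idea within a single process, using one lock per key so that only the first caller loads a cold key while the others wait for its result. The `SingleFlightCache` class is hypothetical, and across multiple servers a distributed lock would have to take the place of `threading.Lock`.

```python
import threading
from typing import Any, Callable, Dict


class SingleFlightCache:
    """Hypothetical in-process cache that coalesces concurrent misses per key."""

    def __init__(self) -> None:
        self._values: Dict[str, Any] = {}
        self._key_locks: Dict[str, threading.Lock] = {}
        self._guard = threading.Lock()  # protects the lock table itself

    def _lock_for(self, key: str) -> threading.Lock:
        with self._guard:
            return self._key_locks.setdefault(key, threading.Lock())

    def get_or_load(self, key: str, loader: Callable[[], Any]) -> Any:
        if key in self._values:
            return self._values[key]  # fast path: no locking on a hit
        with self._lock_for(key):
            # Re-check: another thread may have filled the key while we waited.
            if key in self._values:
                return self._values[key]
            value = loader()  # only one thread per key reaches the source
            self._values[key] = value
            return value
```

The second check inside the lock is what makes the difference: without it, every waiting thread would call the loader as soon as it acquired the lock.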

What are some examples of Caching?

- A news website using Next.js ISR to cache article pages and update them via on-demand revalidation when an editor publishes content through the CMS, so articles load instantly and are always current without requiring full rebuilds.

- An e-commerce platform using Redis to cache product catalog queries with a 5-minute TTL, so the database processes only a fraction of requests and product pages consistently load in milliseconds, even during peak sales events.

- A web application using stale-while-revalidate cache headers so users immediately see cached content while fresh data is fetched in the background, resulting in instant page loads with content that is at most briefly out of date.

- A SaaS dashboard using React Query to cache API responses client-side with a 30-second staleTime, making page navigation feel instant and deduplicating repeated requests.

- A multi-tenant platform prefixing Redis cache keys with tenant IDs so cached data is isolated per customer and cache invalidation for one customer has no impact on another.
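
The stale-while-revalidate behavior from the examples above is normally handled by HTTP caches, but the same idea can be sketched in application code. In this hedged illustration, `SWRCache`, `run_in_thread`, and the injectable `clock` and `schedule` parameters are all hypothetical names chosen for the example.

```python
import threading
import time
from typing import Any, Callable, Dict, Set, Tuple


def run_in_thread(task: Callable[[], None]) -> None:
    """Default scheduler: run the refresh in a background thread."""
    threading.Thread(target=task, daemon=True).start()


class SWRCache:
    """Hypothetical cache that serves stale values while refreshing them."""

    def __init__(self, fresh_seconds: float,
                 clock: Callable[[], float] = time.monotonic,
                 schedule: Callable[[Callable[[], None]], None] = run_in_thread) -> None:
        self._fresh = fresh_seconds
        self._clock = clock
        self._schedule = schedule
        self._entries: Dict[str, Tuple[float, Any]] = {}
        self._refreshing: Set[str] = set()
        self._lock = threading.Lock()

    def get(self, key: str, loader: Callable[[], Any]) -> Any:
        with self._lock:
            entry = self._entries.get(key)
            needs_refresh = (
                entry is not None
                and self._clock() >= entry[0]
                and key not in self._refreshing
            )
            if needs_refresh:
                self._refreshing.add(key)  # at most one refresh per key
        if entry is None:
            # Nothing cached yet: the first caller has to wait for the source.
            value = loader()
            self._store(key, value)
            return value
        if needs_refresh:
            self._schedule(lambda: self._refresh(key, loader))
        return entry[1]  # returned immediately, possibly stale

    def _store(self, key: str, value: Any) -> None:
        with self._lock:
            self._entries[key] = (self._clock() + self._fresh, value)

    def _refresh(self, key: str, loader: Callable[[], Any]) -> None:
        try:
            self._store(key, loader())
        finally:
            with self._lock:
                self._refreshing.discard(key)
```

Note the trade-off this makes explicit: a caller that arrives after the freshness window gets the old value once more, and only later callers see the refreshed one.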

